Alereon posted:SeaMicro does the same for Atoms

SeaMicro tried pretty hard to sell to my company before they got acquired by AMD. When we got them to actually run real tests on their systems, at best, in best-case benchmarks, their Atom-based servers performed less than half as well per dollar or per watt. This was Hadoop, compared to normal whitebox compute nodes (8 Nehalem cores / 24 gigs of RAM, GigE interconnected). I'm not convinced you could find any real-world workload where using Atom CPUs would actually save you money, rack space, power, or whatever is most important for you to save.

| # ¿ Apr 26, 2024 09:12 |

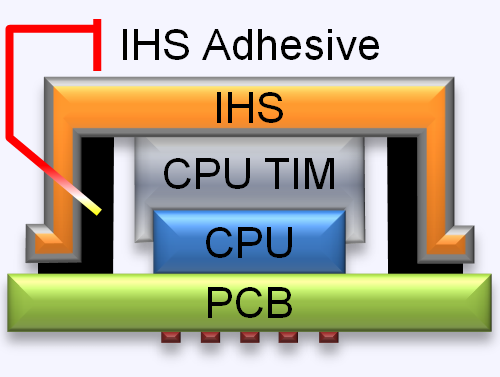

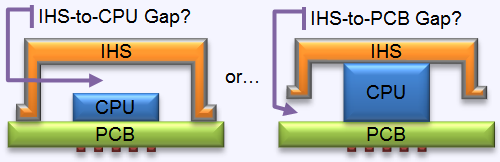

Shaocaholica posted:You should read this:

drat this looks cool and like something I want to try. I don't even overclock, and my i5-2500k is really just fine, so getting a whole new mobo + CPU is unnecessary at best. Can someone assure me that this makes no difference at stock clocks and that I won't be able to run a giant fuckoff tower heatsink fanless by doing this?

KennyG posted:This brings up an interesting (and often overlooked) thorn in Intel's side. It's no secret that about 8-10 years ago, Intel realized they were approaching a diminishing return problem with processor efficiency on a per-core basis. Obviously, they went multi-core. The problem is that a lot of the licensing is making you pay dearly for the privilege. In the enterprise space, the vendors who licensed based on ability usually did it by processor count/architecture (see Oracle). With the proliferation of multi-core chips, most vendors have stayed with a core definition of processor rather than the socket definition that most people think of as a processor. As core count sky-rockets in the next 10-20 years in a search to find more computing power, the software companies' licensing methodology presents a serious challenge to Intel's adoption rate and their bottom line.

Holy gently caress I'll remember that number every time I get angry at PostgreSQL.

Dual-socket Ivy Bridge Xeons should be very, very fast by all indications. Hopefully as fast as the E3-12xx v2 Ivy Bridge chips that are already available (in single socket) in four-core variants. I am, however, a bit skeptical that dual-socket Haswell Xeons will be out in only a year, given how delayed Ivy Bridge Xeons are.

Combat Pretzel posted:Researching my future NAS some more, it seems like I can get an ECC capable mini-ITX mainboard and an Xeon E3-1220V3 for just a few

Because a lovely processor will obviously let you leverage lower pow... gently caress I can't even joke about this. You most likely don't ever want an Atom CPU if you have a choice.

I may as well ask here before I hit the Linux thread: does anyone know a way in Linux, on the CLI, to get the current CPU turbo clock / power state? I'm trying to figure out how hard a supposedly CPU-bound application is hitting our servers, but I don't know how fast the CPUs are running (eventually I will graph this with Cacti or SNMP). It turns out the Intel E5-26xx frequency-optimized chips are expensive, so I don't want to switch to those if it won't make a difference. Luckily the Haswell version of the good old E5-2620 chip bumps base and turbo clock a fair amount for no additional cost, so I am hoping to squeeze by on that.

syzygy86 posted:Information on each core, including its current clock speed, can be grabbed from /proc/cpuinfo.

Oops, guess I should have actually checked that. I assumed it was informational or set at boot or something:

cat /proc/cpuinfo |grep "cpu MHz"
cpu MHz : 3401.000
cpu MHz : 1600.000
cpu MHz : 3401.000

Not sure why/how HT factors into this. I'm usually seeing one logical core of eight reporting 1600 or 2100 MHz on a four-core chip (Intel(R) Xeon(R) CPU E3-1240 V2 @ 3.40GHz).

e: clarified, this is from a single cat /proc/cpuinfo

Aquila fucked around with this message at 05:18 on Sep 10, 2014
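For anyone who wants the same numbers in a script rather than eyeballing grep output, here is a rough Python sketch. The function names (`parse_cpu_mhz`, `main`) are made up for illustration, and it assumes an x86 Linux box where /proc/cpuinfo has one "cpu MHz" line per logical CPU; other architectures may not report that field at all. It is a single snapshot, same as one cat, so it will not catch short turbo bursts.

```python
from pathlib import Path


def parse_cpu_mhz(cpuinfo_text):
    """Return one float per 'cpu MHz' line, in the order the lines appear."""
    speeds = []
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Only keep lines whose field name is exactly "cpu MHz".
        if sep and key.strip() == "cpu MHz":
            speeds.append(float(value))
    return speeds


def main(path="/proc/cpuinfo"):
    cpuinfo = Path(path)
    if not cpuinfo.exists():
        print(f"{path} not found (not a Linux system?)")
        return
    speeds = parse_cpu_mhz(cpuinfo.read_text())
    if not speeds:
        print("no 'cpu MHz' lines reported on this machine")
        return
    for cpu, mhz in enumerate(speeds):
        print(f"logical cpu {cpu}: {mhz:.0f} MHz")
    print(f"min {min(speeds):.0f} / max {max(speeds):.0f} MHz")


if __name__ == "__main__":
    main()
```

Run it in a loop (or from cron) while the application is under load to get a feel for how often the cores actually sit at their top clock.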

Tab8715 posted:If I'm looking at the specifications correctly, couldn't a single P3700 potentially replace some SANs? I'm a little storage illiterate...

Yes, I am hoping to replace a $300k SAN with ~10 1.6TB P3700s. Based on Intel's track record with SSDs, however, I expect them to be 6 months late to market, and the largest capacity to never actually be sold. Likewise, I'm dubious the P3500 will ever appear in sufficient quantity to meet corporate demand, let alone enthusiast.

e:

quote:Maybe in terms of IOPS, but some of our SANs are hundreds of terabytes. There is a balance to be had between IO, capacity, redundancy, and cost. SSD hasn't quite gotten there for most enterprises when it comes to capacity and cost compared to spinning media.

Not a derail in my opinion. You're certainly right these won't replace all SANs, but they will significantly shake up the ultra-high-performance side of things (which is what my SAN is for).

KillHour posted:When the hell are the P3500's coming out? They hit my price/performance sweet spot, and I can finally stop using workstation PCIe SSDs for my servers.

Probably never. Intel is very bad about actually bringing enterprise SSDs to market, and demand for the P3600 and P3700 is phenomenal.

DDR4 prices for V3 Xeons, holy poo poo. I may have to stick with V2 chips. Anyone know the clock-for-clock performance differences between V2 and V3 E5-26xx chips (assuming same clock/cores)?

Shaocaholica posted:Maybe it's just me but does all consumer UEFI look the same? Like they took the Intel code and slapped their own superficial Winamp skin on it?

Not from my experience (PowerEdge 720), just a slow rear end wrapper around a boring but nominally functional BIOS and related crap. Slow boots, and occasionally the whole system refuses to POST if you make too many configuration changes at once.

Grundulum posted:My work machine has an i7-3930k in it; 6 physical cores with two-way hyperthreading, so 12 logical cores. When I try to run jobs that use more than one core, I get the distinct impression that I'm being throttled due to thermal considerations. That leads me to two questions:

Check the BIOS (especially if it's an actual workstation-type system from a big vendor like Dell or HP) for performance and thermal profiles. Just in case, if it's running Linux there are some GRUB parameters you can set as well, including one which can steal 30% of your performance if it's used with hyperthreading. Also consider something like RealTemp or another monitoring tool. RealTemp shows current clock speed/multiplier along with temps for each core.

s.i.r.e. posted:Jesus that's embarrassing, so much for trusting my friends; welp, so now the new issue is what's a better cooler for my rig since my case will only accept a low profile? Or should I just say gently caress it and build in a case that accepts a large profile heatsink?

I use an NH-L12 and it never has any trouble keeping either a 4670 or 2500K cool in a somewhat confined, almost silent case. It looks like the NH-L9i is way smaller, but I'm still surprised it's working that badly; Noctua usually makes top-quality stuff. In my main desktop system I was long confined to 92mm tower coolers, and when I went to a case that allows 120mm coolers the difference was huge. It's not just that there's at least 70% more surface area; all the new flagship products are 120mm (or bigger), so that's where you have the best chance of finding a good design.

e: Here is SPCR finding the NH-L9i completely sufficient for a 95W TDP chip

disclaimer: personally I love Noctua heatsinks for being so quiet.

Aquila fucked around with this message at 19:03 on Nov 23, 2015
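The "at least 70%" figure can be sanity-checked with quick arithmetic. This is only a sketch that assumes the comparison is between square 92mm and 120mm fan footprints; real fin area also depends on fin count, spacing, and tower depth, none of which is modeled here. The function name is just for illustration.

```python
def frontal_area_ratio(big_mm, small_mm):
    """Ratio of two square fan footprints, given their side lengths in mm."""
    return (big_mm / small_mm) ** 2


ratio = frontal_area_ratio(120, 92)
print(f"120mm vs 92mm footprint: {ratio:.2f}x")  # prints 1.70x
```

So a 120mm footprint is roughly 1.7 times a 92mm one, which lines up with the 70% number before counting any extra fin depth.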

canyoneer posted:So I've been planning on replacing my dinosaur media PC with a skylake NUC, but I found this in an amazon review.

Not heard about it, but I have a Skylake NUC running Ubuntu 14 desktop and it's been mostly great. No sign of MCEs in syslog. The system did hardlock the first night with nothing of note in the logs, but has been solid since then (I updated the BIOS from the original release to current the day after it hardlocked). Overall I'm really happy with this NUC.

Aquila fucked around with this message at 17:23 on Apr 8, 2016

SuperDucky posted:We're having some, uh, interesting stability issues with Broadwell-EP on boards that worked just fine on Haswell. AMI and Intel are stumped.

Uh oh, and Dell just "upgraded" our most recent order to v4 Xeons for "free".

Two mysterious kernel panics with our new v4 Xeons (E5-2640 v4) in just a month so far, a little bit worrisome. First one was extra special:

code:

I mention all this just because I recall someone in this thread complaining about problems with v4 Xeons, but I haven't seen too many complaints googling around the internet at large. Overall, of course, these new chips are awesome and are giving us 20 cores per Hadoop node for the cost and power of 12 not too long ago. Maybe less power.

ZobarStyl posted:Has there been any details about the form factor or compatibility of Optane? I really don't want to sidegrade from Skylake to Kaby Lake for storage alone.

I talked to people at conferences who've used both the DIMM form factor and regular NVMe PCIe versions of Optane/3D XPoint. I strongly expect all Optane devices to be big data center only for at least a year. Hell, remember that even the original P3700 devices were nearly impossible for smaller shops to get for six months+.

I'm very interested in getting the DIMM stuff into the hands of all the DB developers (SQL, KVP, etc.), as it's a sea change to have all your RAM be non-volatile and to have 10x as much of it. From what I've seen so far, no one (people, operating systems, kernels, DB software) has any inkling of what to do with it.

more than the four core Avoton. So why exactly would I want that Atom CPU?
