> EoRaptor posted: the CPU cache control hardware won't be optimized for it, so it may not yield as much benefit as it could.

This makes no sense whatsoever.

| # ? Apr 28, 2024 18:49 |

> Agreed posted: When not in use, bet your sass it does! Ask anyone with a 2600K/2700K in a P67 about the really cool core. I've got arguably the world's best air heat sink bolted to my 2600K and there's still one core that is consistently, under load, solidly 7-10°C cooler than the others.

I just want to be clear: are you saying that a hypothetical Intel processor without integrated graphics would run hotter than one with integrated graphics that wasn't being used, all other factors being equal?

> Ignoarints posted: I just want to be clear: are you saying that a hypothetical Intel processor without integrated graphics would run hotter than one with integrated graphics that wasn't being used, all other factors being equal?

> Alereon posted: This is a true statement. Thermal density is measured in watts per square mm. Lower area with the same heat means higher thermal density, and higher thermal density with the same thermal resistance (heatsink) means a hotter CPU.

Yes, okay, that makes sense. That was one of the factors I mentioned earlier (if they actually made the die smaller, it would indeed be hotter).
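
Alereon's point can be put into numbers with a toy lumped model. Everything here is an assumption for illustration: the 84 W load, the two die areas (one with and one without a hypothetical iGPU region), the thickness and conductivity of the interface layer under the die, and the heatsink resistance. Die area enters only through that layer's conduction resistance, R = t / (k·A). Shrinking the die therefore raises both W/mm² and junction temperature, even with the same heatsink.

```python
# Toy lumped thermal model. All numbers are hypothetical, not Intel data.


def thermal_density(power_w: float, area_mm2: float) -> float:
    """Heat flux through the die, in W/mm^2."""
    return power_w / area_mm2


def junction_temp(power_w: float, area_mm2: float,
                  t_ambient_c: float = 25.0,
                  r_heatsink_k_per_w: float = 0.25,
                  tim_thickness_m: float = 50e-6,
                  tim_k_w_per_mk: float = 5.0) -> float:
    """T_j = T_ambient + P * (R_tim + R_heatsink).

    R_tim = thickness / (k * area) is the conduction resistance of the
    interface layer under the die, so it grows as the die shrinks.
    """
    area_m2 = area_mm2 * 1e-6
    r_tim = tim_thickness_m / (tim_k_w_per_mk * area_m2)
    return t_ambient_c + power_w * (r_tim + r_heatsink_k_per_w)


if __name__ == "__main__":
    # Same 84 W on a die with and without a hypothetical iGPU region.
    for area in (177.0, 130.0):
        print(f"{area:5.0f} mm^2: {thermal_density(84.0, area):.3f} W/mm^2, "
              f"T_j ~ {junction_temp(84.0, area):.1f} C")
```

With these made-up numbers, the smaller die runs less than 2 °C hotter. Real dies also have local hotspots, which a single lumped node cannot show.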

> BobHoward posted: What relevance do those named features have to the average overclocking enthusiast?

Oh, not so much; it just seems remarkably petty to eliminate features that are no doubt still available, just locked out.

BobHoward posted:

At the end of the day though it's all about cash. Apple has it and Intel knows it so they can work out better deals because they know Apple can pay. Other OEMs aren't quite that endowed.

> Ignoarints posted: I think whatever physical space it uses would get saturated by heat pretty much instantly. Now if it were being used it'd just create more heat, and if the die was actually smaller I'm sure heat would be more concentrated per *crazy unit of measurement*, but I don't even really mean that. I wondered if leaving it out would leave "room" in the design to make the processor gerbils run better. But I kind of doubt it's something as simple as that.

It's significant enough that Xeons enable higher turbo frequencies when cores are disabled. I don't know if consumer chips do the same.

I'm still rocking a 2500K for gaming, so do I upgrade to the Haswell refresh or wait for Broadwell?

> Henrik Zetterberg posted: This makes no sense whatsoever.

If it's there just for the iGPU, then my statement is false. However, if the CPU can access it as an L4 cache, then you are relying on the cache control logic to correctly decide what data to keep in that cache, what to flush, and what to precache, and to keep the cache coherent among all the threads that could access it. This is a very specialized bit of logic, tailored very specifically to the cache size that is on the CPU. It can certainly use a larger cache, but the performance improvement won't be as great as if that logic had been built for the larger cache pool from the start.

It's a full L4 cache.

I really can't fathom what unimaginable havoc an 8MB L3's control logic could inflict on a 128MB L4 acting as a victim cache, but "Intel built an entire new memory, packaging, and on-die communication scheme and forgot to tell the core design team for a few years" is stupid enough that it's not worth getting into the technical side.
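
Below is a toy model of the victim-cache arrangement described above. It assumes fully associative LRU levels and an exclusive L4 that is filled only by L3 evictions. The class names and sizes are hypothetical, and this is not a description of Intel's actual eDRAM policies. The point it illustrates is that the L3 keeps its own replacement logic unchanged and simply hands each evicted line down to the L4.

```python
from collections import OrderedDict
from typing import Optional


class LRUCache:
    """Fully associative LRU set of cache-line addresses (no data payload)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.lines: "OrderedDict[int, None]" = OrderedDict()

    def __contains__(self, addr: int) -> bool:
        return addr in self.lines

    def touch(self, addr: int) -> None:
        self.lines.move_to_end(addr)  # mark as most recently used

    def remove(self, addr: int) -> None:
        del self.lines[addr]

    def insert(self, addr: int) -> Optional[int]:
        """Insert as MRU; return the evicted LRU line, if any."""
        self.lines[addr] = None
        self.lines.move_to_end(addr)
        if len(self.lines) > self.capacity:
            victim, _ = self.lines.popitem(last=False)
            return victim
        return None


class L3WithVictimL4:
    """Hypothetical L3 whose evictions are the only way lines enter the L4."""

    def __init__(self, l3_lines: int, l4_lines: int) -> None:
        self.l3 = LRUCache(l3_lines)
        self.l4 = LRUCache(l4_lines)
        self.served_by = {"l3": 0, "l4": 0, "mem": 0}

    def access(self, addr: int) -> str:
        if addr in self.l3:
            self.l3.touch(addr)
            level = "l3"
        else:
            if addr in self.l4:
                self.l4.remove(addr)       # exclusive: line moves back up to L3
                level = "l4"
            else:
                level = "mem"              # missed both levels: fetch from DRAM
            victim = self.l3.insert(addr)  # L3 uses its own, unchanged LRU policy...
            if victim is not None:
                self.l4.insert(victim)     # ...and just hands its victim down
        self.served_by[level] += 1
        return level


if __name__ == "__main__":
    cache = L3WithVictimL4(l3_lines=4, l4_lines=16)
    for _ in range(3):            # working set is twice the size of the L3
        for addr in range(8):
            cache.access(addr)
    print(cache.served_by)
```

Looping over eight lines with a four-line L3 thrashes the L3 completely, so there are zero L3 hits. After the first pass, though, every access is caught by the L4 instead of going to DRAM. This run should report 16 L4 hits and 8 DRAM fetches.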

> spasticColon posted: I'm still rocking a 2500K for gaming, so do I upgrade to the Haswell refresh or wait for Broadwell?

I think if you feel like you could wait for Broadwell, then you probably should.

> spasticColon posted: I'm still rocking a 2500K for gaming, so do I upgrade to the Haswell refresh or wait for Broadwell?

This is literally me. Loving hell, I guess I'm not building a new PC until 2015. Dammit Intel. At least it gives Nvidia extra time with Maxwell, I guess. Gonna pair up an i5-5500K with a GTX 970.

> spasticColon posted: I'm still rocking a 2500K for gaming, so do I upgrade to the Haswell refresh or wait for Broadwell?

There's still no compelling reason to upgrade if your main purpose is gaming. I have the same CPU and the money set aside to do a new build, but there's just no benefit to doing it right now.

I have a 2500K and a GTX 680 and have zero reason to upgrade anytime soon. This is the longest I've gone without wanting to upgrade, and I have more disposable income than ever before. I'll be honest and say that X99 and DDR4 sound cool, but not with the insane price tag I'm sure will come with them.

Do we know if X99 will only support DDR4, or will we be getting a mixture of DDR3 and DDR4 mobos like we did when DDR3 first came out?

> The Lord Bude posted: Do we know if X99 will only support DDR4, or will we be getting a mixture of DDR3 and DDR4 mobos like we did when DDR3 first came out?

Does an i7-4770K match up particularly well with a certain kind of motherboard at or below a $200 price tag?

> Alereon posted: DDR4 only. It's a completely different technology, so there is no backwards compatibility. Notably, boards can only have one slot per channel, so X99 boards will have four slots that are all populated. Upgrading RAM involves replacing your existing DIMMs with new, larger ones.

If the price was reasonable (say, no more than $200 more than a 4770K + mobo), I'd actually consider an entry-level Haswell-E setup, assuming the performance in the metrics I care about was worth it. But I'm dead set on going mITX, and I'm not aware of any mITX socket 2011 mobo on the market, so I doubt that situation would change much with Haswell-E.

Do we know anything at all about Z97 except that it won't support SATA Express?

> JawnV6 posted: I really can't fathom what unimaginable havoc an 8MB L3's control logic could inflict on a 128MB L4 acting as a victim cache, but "Intel built an entire new memory, packaging, and on-die communication scheme and forgot to tell the core design team for a few years" is stupid enough that it's not worth getting into the technical side.

Mods, please change Jawn's title to a Cream reference, tia. 128MB L4 is gonna own own own for data crunching.

Yea, I can't see a good reason to upgrade my i5-2500K. 99% of the time I'm GPU-limited or limited by my storage. With SATA Express not being included with Broadwell, what's the point in upgrading? I'm waiting for Skylake.

> Tab8715 posted: Yea, I can't see a good reason to upgrade my i5-2500K. 99% of the time I'm GPU-limited or limited by my storage.

Basically, if you are pushing your CPU to 100% for things like scientific data crunching, photo manipulation/importing/exporting (like if you were a pro wedding photographer doing huge bulk stuff all day), or video encoding, I can see the need, but I'm hoping to be able to use this i5 4-series for a long-rear end time. Even the 2-series stuff is just barely worse. I'd have to be super worried about saving every precious minute of my day to NEED to upgrade to a Haswell. It's pretty awesome.

> Black Dynamite posted: Does an i7-4770K match up particularly well with a certain kind of motherboard at or below a $200 price tag?

> atomicthumbs posted: Do we know anything at all about Z97 except that it won't support SATA Express?

> HalloKitty posted: Oh, not so much; it just seems remarkably petty to eliminate features that are no doubt still available, just locked out.

There are no "free" features in this world. If they believed there was enough of a market, they'd create a new SKU and have you pay more for that set of features. Until then it's zippy-zap with the laser.

Has Intel released any information about their roadmap post-Skymont? They're getting close to the end, there...

> canyoneer posted: There are no "free" features in this world.

You're wrong on... pretty much everything here. I've outlined before why I think particular features aren't enabled on the -K series. Whipping up a new SKU is nontrivial, and there aren't any lasers involved in the process.

> canyoneer posted: There are no "free" features in this world.

> Alereon posted: While this is a question for the Parts Picking Megathread, the correct answer is the Asus ROG MAXIMUS VI HERO for $199. These boards have unmatched integrated audio quality and great features, and they don't skimp on build and component quality like Gigabyte boards. This is what I'd be building a system around if I wasn't waiting for June.

Thanks. Sorry for posting in the wrong thread. Is there something new being released in June?

> Black Dynamite posted: Thanks. Sorry for posting in the wrong thread. Is there something new being released in June?

Is it weird that I'm super excited for the dual-core K processor? Something about a super cheap, heavy overclocker that can burn through single-threaded apps is really appealing.

I suddenly have this urge to take out a loan this summer. Well... after reviews come out, anyways.

The K-SKU Broadwell CPU with Iris Pro graphics makes sense when you remember how Sandy Bridge launched. All the Sandy parts had HD 2000 except for the mobile parts... and the K SKUs. If you needed HD 3000 on a desktop, it was a reason to buy a K over a similarly priced non-K plus a cheapo discrete GPU to get similar oomph in the end.

> Agreed posted: When not in use, bet your sass it does! Ask anyone with a 2600K/2700K in a P67 about the really cool core. I've got arguably the world's best air heat sink bolted to my 2600K and there's still one core that is consistently, under load, solidly 7-10°C cooler than the others.

Hate to bring this back up. Although I'm still not convinced something so small could be considered a heat sink next to something so hot for more than, say, a microsecond, I am positive that an inactive portion of the CPU itself that simply isn't producing heat would obviously make the whole chip cooler than if it were. And the hypothetical chip with no integrated graphics, if it were smaller because of it, would be hotter. So in effect it sort of acts like a heat sink. If it were the same size, though, I don't think there would be any difference at all, except cost, and then that just goes back to "well, it's probably not that simple". Anyway... splitting hairs. I just really wanted to say I did actually have a core that was cooler than the others (#4) by almost ten degrees sometimes under load. After looking this up, it seemed common enough. However, after delidding and redoing the TIM, all the cores evened out.
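
The "more than a microsecond" question can be checked with a steady-state resistor model. This is a minimal sketch in which every thermal resistance (K/W), the 20 W core load, and the 50 °C heatsink temperature are hypothetical. An idle neighbour dissipating 0 W still gives the core's heat a second path to the heatsink (core → neighbour → sink) in parallel with the direct path. Because this is steady state, the benefit persists for as long as the neighbour has its own route to the heatsink.

```python
def parallel(r1: float, r2: float) -> float:
    """Equivalent resistance of two heat paths side by side."""
    return r1 * r2 / (r1 + r2)


def core_temp(power_w, t_sink_c, r_core_sink, r_lateral=None, r_neighbor_sink=None):
    """Steady-state core temperature in a toy resistor network.

    Without a neighbour, all heat flows core -> sink.
    With an idle (0 W) neighbour, heat can also flow core -> neighbour -> sink,
    and the two paths combine in parallel.
    """
    if r_lateral is None or r_neighbor_sink is None:
        r_eff = r_core_sink
    else:
        r_eff = parallel(r_core_sink, r_lateral + r_neighbor_sink)
    return t_sink_c + power_w * r_eff


if __name__ == "__main__":
    print(core_temp(20.0, 50.0, 1.0))            # no idle neighbour
    print(core_temp(20.0, 50.0, 1.0, 2.0, 1.0))  # idle neighbour at 0 W
```

With these numbers, the core sits at 70.0 °C alone and 65.0 °C with the idle neighbour attached. The model says nothing about die cost or layout, only about where the heat can go.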

Silicon is a decent thermal conductor. Not as good as aluminum or copper by any means, but cold silicon next to a hot circuit does help conduct heat away from it and into the heatsink.
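
To put "decent" in numbers, here is one-dimensional conduction resistance, R = t / (k·A), for a small slab of each material. The conductivities are approximate room-temperature textbook values for the pure materials; silicon's is somewhat lower at typical die temperatures. The slab dimensions are hypothetical.

```python
# Approximate room-temperature thermal conductivities, W/(m*K).
CONDUCTIVITY = {"copper": 400.0, "aluminum": 237.0, "silicon": 150.0}


def slab_resistance(k_w_per_mk: float, thickness_m: float, area_m2: float) -> float:
    """One-dimensional conduction resistance R = t / (k * A), in K/W."""
    return thickness_m / (k_w_per_mk * area_m2)


if __name__ == "__main__":
    # Hypothetical slab: 0.5 mm thick, 1 mm x 1 mm cross-section.
    for name, k in CONDUCTIVITY.items():
        r = slab_resistance(k, 0.5e-3, 1e-6)
        print(f"{name:8s} R = {r:.2f} K/W (temperature rise per watt)")
```

Silicon comes out at roughly 2.7 times copper's resistance and about 1.6 times aluminum's, which is worse but still the same order of magnitude, so neighbouring silicon is a real heat path.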

> Ignoarints posted: Hate to bring this back up. Although I'm still not convinced something so small could be considered a heat sink next to something so hot for more than, say, a microsecond, I am positive that an inactive portion of the CPU itself that simply isn't producing heat would obviously make the whole chip cooler than if it were. And the hypothetical chip with no integrated graphics, if it were smaller because of it, would be hotter. So in effect it sort of acts like a heat sink. If it were the same size, though, I don't think there would be any difference at all, except cost, and then that just goes back to "well, it's probably not that simple". Anyway... splitting hairs.

The costs for both chips would in fact be higher!

Are any of the Xeon E3-12xx v3 processors going to get a Haswell refresh, or is it reserved for the desktop line? I'm wondering because I'd like to get one and don't want to wait for the Broadwell release, plus the time it then takes for prices to drop to levels sane consumers would pay.

> Ignoarints posted: Hate to bring this back up. Although I'm still not convinced something so small could be considered a heat sink next to something so hot for more than, say, a microsecond, I am positive that an inactive portion of the CPU itself that simply isn't producing heat would obviously make the whole chip cooler than if it were.

I was trying to find the processor manual where they explicitly say that the reason they allow higher max turbo frequencies on Xeons with cores disabled* is thermal effects, but Intel's site is a mess and I don't really feel like downloading 200-page PDFs on my connection. A disabled graphics core would work on the exact same principle.

(*Disabled as in: if you have an 8-core Xeon processor, almost all server boards will let you run it in 1/2/4/8-core mode, mainly because of per-core licensing restrictions on some enterprise software.)
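
The effect described here is usually presented as a turbo table keyed by the number of active cores: fewer active cores unlock more 100 MHz multiplier bins above base. Below is a sketch of that lookup. The base ratio and bin table describe a made-up 8-core part, not any real SKU. Actual turbo is also capped at runtime by power and thermal headroom, which this ignores.

```python
# Hypothetical 8-core part; BASE_RATIO and TURBO_BINS are invented for illustration.
BCLK_MHZ = 100   # base clock of Sandy Bridge and later mainstream Intel CPUs
BASE_RATIO = 24  # 2400 MHz base
# Extra multiplier bins above BASE_RATIO, keyed by number of active cores.
TURBO_BINS = {1: 8, 2: 8, 3: 7, 4: 6, 5: 5, 6: 4, 7: 3, 8: 3}


def max_turbo_mhz(active_cores: int) -> int:
    """Upper turbo limit for a given active-core count (ignores power limits)."""
    if active_cores not in TURBO_BINS:
        raise ValueError(f"active_cores must be between 1 and {max(TURBO_BINS)}")
    return (BASE_RATIO + TURBO_BINS[active_cores]) * BCLK_MHZ


if __name__ == "__main__":
    # Disabling cores in the BIOS moves the chip to the upper rows of the table.
    for n in (1, 2, 4, 8):
        print(f"{n} active core(s): up to {max_turbo_mhz(n)} MHz")
```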

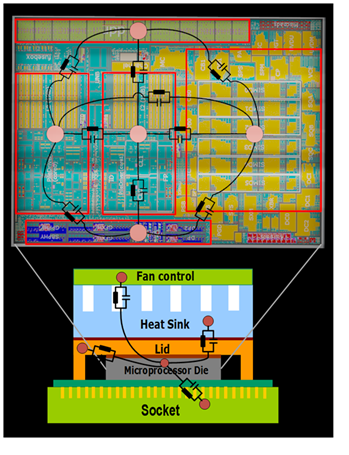

Chuu posted:I was trying to find the processor manual where they explicitly say that the reason they allow higher max turbo frequencies on Xeons with cores disabled* was because of thermal effects, but intel's site is a mess and I don't really feel like downloading 200 page PDF's on my connection. A disabled graphics core would work on the exact same principal. In particular:  You can model the power generating & heat sinking parts of a processor as an RC network, where idle silicon (or parts of the chip that are generating less heat) can sink power away from hot parts. This helps mitigate (but does not eliminate) local hotspots and allows for more heat to flow to the lid and heatsink due to greater surface area. Intel's Hot Chips talk about Sandy Bridge's power management goes into some details about this too, though doesn't directly describe how the cores heatsink one another.
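
Here is a minimal sketch of that RC-network idea: two die nodes, a 20 W "core" and a 0 W "igpu", each with a heat capacity and a resistance to a heatsink held at a fixed temperature, plus a lateral resistance between them. Every value is hypothetical. The integration uses explicit Euler for simplicity, so the time step must stay well below the network's shortest time constant to remain stable.

```python
def simulate(p_core_w=20.0, t_sink_c=50.0, duration_s=1.0, dt_s=1e-4,
             c_core_j_per_k=0.01, c_igpu_j_per_k=0.02,
             r_core_sink=1.0, r_lateral=2.0, r_igpu_sink=1.0):
    """Explicit-Euler integration of a two-node thermal RC network.

    'core' dissipates p_core_w; 'igpu' dissipates nothing. Both conduct to a
    heatsink at t_sink_c and to each other through r_lateral (K/W).
    Returns the final (core, igpu) temperatures in deg C.
    """
    t_core = t_igpu = t_sink_c  # start everything at heatsink temperature
    steps = int(round(duration_s / dt_s))
    for _ in range(steps):
        # Heat flows (W) computed from the current temperatures.
        q_core_sink = (t_core - t_sink_c) / r_core_sink
        q_lateral = (t_core - t_igpu) / r_lateral      # core -> idle igpu
        q_igpu_sink = (t_igpu - t_sink_c) / r_igpu_sink
        # Net heat into each node divided by its heat capacity gives dT/dt.
        t_core += dt_s * (p_core_w - q_core_sink - q_lateral) / c_core_j_per_k
        t_igpu += dt_s * (q_lateral - q_igpu_sink) / c_igpu_j_per_k
    return t_core, t_igpu


if __name__ == "__main__":
    print("with idle igpu:   core %.2f C, igpu %.2f C" % simulate())
    # An infinite lateral resistance cuts the igpu off from the core entirely.
    print("igpu cut off:     core %.2f C, igpu %.2f C" % simulate(r_lateral=float("inf")))
```

With these values, the connected case settles near 65 °C for the core and 55 °C for the idle igpu, while the isolated core settles near 70 °C. Early in the transient, the igpu's heat capacity also soaks up some heat before it warms.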

> Chuu posted: I was trying to find the processor manual where they explicitly say that the reason they allow higher max turbo frequencies on Xeons with cores disabled* is thermal effects, but Intel's site is a mess and I don't really feel like downloading 200-page PDFs on my connection. A disabled graphics core would work on the exact same principle.

Well, if you disable a core that was otherwise running, you will reduce the heat. But the heat reduction comes from something no longer creating heat, rather than from it acting as a heatsink. But I'm really, really, really splitting hairs now and will simply concede lol. This all just started from me disagreeing with the justification of the presence of an IGP as a "heatsink" when disabled.

Failed Sega Accessory Ahoy!
