|

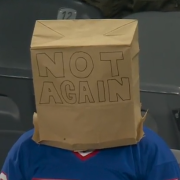

WarLocke posted:Well, I said 'absolutely sure'. Every other Guest so far has been like "Look at that loving poo poo man" and she was just so into it. Clementine

|

|

|

|

|

| # ? Apr 27, 2024 16:53 |

|

VendaGoat posted:Before I ask my question, know that I understand your position. It doesn't really seem important to the scope of the story. Or at least to the main themes. We don't need to know what the price of tea in future China is to explore sentience, and outside world building that doesn't relate to that question is wasted script time. WarLocke posted:Yeah, I don't think Bernard is actually a robot, I just think the show is going out of the way to imply he's one as a red herring. It's quickly become my favorite game to play while watching this show: "Is this new character a guest or a host?" The only consistent tell I've seen so far is if a known host is super nice to a stranger and lets them tag along to stuff.

|

|

|

|

Phanatic posted:On what basis is that a fact? Since the brain is a neural network with non-linear dynamics, even slight variations in the edge weights or the amount of edges can produce totally unpredictable results in the output and a simplified approximation of the network could never accurately reproduce these non-linear dynamics. Think about how the brain is constantly modifying itself, how a person can change their viewpoint during a conversation, depending on what you are saying and how you are saying it, depending on what mood they have or how much they slept last night. How a person can form totally new arguments based on this new viewpoint, refine them and reinterpret their previous thoughts. All parts of the brain are actually involved in this process, every single neuron in the brain is firing, every single axon is transmitting to produce this result. There aren't isolated parts that you can just take out and still have the same conversation with that person.

|

|

|

|

Raspberry Jam It In Me posted:Since the brain is a neural network with non-linear dynamics, even slight variations in the edge weights or the amount of edges can produce totally unpredictable results in the output and a simplified approximation of the network could never accurately reproduce these non-linear dynamics. That doesn't mean we can't achieve equivalent intelligence (observed externally) through alternative designs. Which is what Ford was getting at with the "bootstrapping from a bicameral mind architecture" explanation.

|

|

|

|

WarLocke posted:Yeah, I don't think Bernard is actually a robot, I just think the show is going out of the way to imply he's one as a red herring. I wonder if you get some kind of ratings warning before you take a quest (maybe the dead bodies?) or if there is a safe word/action to get off the ride or taken back into town. Like Teddy's storyline went from capturing bounties and banging whores to  pretty quickly. It'd seem like a pretty bad way to end a trip, scared shitless watching your buddy NPC get hacked to death, not to mention that having frightened guests run off lost in the middle of the night also seems like a recipe for disaster.

|

|

|

|

nexus6 posted:That makes things a lot clearer. I guess the point I was trying to get at (and not articulate very well) was that some story loops appear to have very key events that often or always happen e.g. Dolores dropping a can. Exactly. If you hang out in the starter town often enough you will see Dolores drop that can multiple times. I think Logan's only been to the park once before and he was already bored with the drunk prospector popping up all the time. If you stay in that town for more than a few days the illusion starts to fade and you begin to see repetition, which is why they try to send you on quests as quickly as possible. Jack Gladney posted:Do you think anybody wanted to gently caress the hosts in the Wild Bill era, or was that more of a natural evolution? Also does anybody miss loving the hosts from the Wild Bill era? Why do you think Ford goes down to cold storage?

|

|

|

|

Kegslayer posted:I wonder if you get some kind of ratings warning before you take a quest (maybe the dead bodies?) or if there is a safe word/action to get off the ride or taken back into town. Well that lady was warned about what Wyatt's men were like. Then there is the hail of bullets at the tree of woe. Which is where the deputy took the one guest back to town. All before being back to back with Teddy and hosts that don't drop from gunfire. I think she had plenty of warnings and besides, Teddy gave her his knife. That'll get her back to town in one piece.  Krispy Kareem posted:I think Logan's only been to the park once before and he was already bored with the drunk prospector popping up all the time. "Good Evening!" *STABS*

|

|

|

|

Kegslayer posted:I wonder if you get some kind of ratings warning before you take a quest (maybe the dead bodies?) or if there is a safe word/action to get off the ride or taken back into town. You kind of see that happening in real-time, when the bandits start shooting at the group and the sheriff is all "You can go back to town if you want to, but I'm staying here and fighting". That's virtually a "Do you want to continue this quest? [Yes/No]" check right there. The two coward dudes are like 'gently caress this' so the black Host takes them back to town, but the woman is like "I got this" so the quest continues with her, the sheriff and Teddy.

|

|

|

|

kimbo305 posted:That doesn't mean we can't achieve equivalent intelligence (observed externally) through alternative designs. Yeah, that's what I'm saying. My point was that you need to achieve equivalent intelligence, otherwise, if you settle for less, it will be noticeable in SOME way.

|

|

|

|

quote:Do you think anyone wanted to gently caress the hosts when ______? Yes.

|

|

|

|

The Dave posted:Yes. More like quote:Do you think anyone wanted to gently caress the ______?

|

|

|

|

VendaGoat posted:All before being back to back with Teddy and hosts that don't drop from gunfire. I totally figured out why they didn't drop from gunfire. They discovered the ancient secret of invulnerable quest NPCs! Westworld, now with more hosts that can't be killed outside of their story line!

|

|

|

|

Krispy Kareem posted:Exactly. If you hang out in the starter town often enough you will see Dolores drop that can multiple times. Man, they had already established Logan as being a bit of a dick before they enter the park, but the way he kept wanting to rush William along was annoying. And yet as William pointed out, all Logan had done since they arrived was gently caress and drink and not wanting to explore. For all we know, the drunk's treasure is real, but since he's so annoying no one's ever taken him up on it. Drunk Eye Patch Guy is the key to the maze.

|

|

|

|

Blazing Ownager posted:I totally figured out why they didn't drop from gunfire. Well they did establish in dialogue before then that those guys were hopped up on crazy, thought they were already dead/in hell and didn't feel pain. But yeah there's so many MMO/game comparisons in the show and it tickles me every time one of them shows up.

|

|

|

|

Jack Gladney posted:Dolores is reset after she's killed. They have a death mode that they go into that tells somebody to retrieve and repair them, I think. Maybe she auto-resets if the raid on the ranch doesn't happen? According to that Dolores flow chart that was put up, that's exactly what happens. Unless a Guest takes her to the Ranch and chooses to not murder her family and rape her, I guess, at which point the flow says it's "Guest's choice" after she fixes them dinner, and that Teddy will back off immediately if someone is being nice to her in town. I have to say, that website has some of the coolest and most well thought out outside of show stuff I've seen. Most end up having nothing to do with the show/movie or end up a bunch of lame promotional material we already knew, but things like the flow loop actually work within the show, and are really pretty drat interesting.

|

|

|

|

VendaGoat posted:Clementine AKA Poor Man's Lady Gaga

|

|

|

|

mng posted:Man, they had already established Logan as being a bit of a dick before they enter the park, but the way he kept wanting to rush William along was annoying. And yet as William pointed out, all Logan had done since they arrived was gently caress and drink and not wanting to explore. You know I just realized there's a very key demographic missing from Westworld. We have the little kids, a bunch of them. We have the adults. But where are the snot nosed, dickheaded teenagers that want to carry 8 guns and grief everyone in town?

|

|

|

|

Raspberry Jam It In Me posted:Since the brain is a neural network with non-linear dynamics, even slight variations in the edge weights or the amount of edges can produce totally unpredictable results in the output and a simplified approximation of the network could never accurately reproduce these non-linear dynamics. That's a statement of faith, not of evidence. quote:Think about how the brain is constantly modifying itself, how a person can change their viewpoint during a conversation, depending on what you are saying and how you are saying it, depending on what mood they have or how much they slept last night. How a person can form totally new arguments based on this new viewpoint, refine them and reinterpret their previous thoughts. All parts of the brain are actually involved in this process, every single neuron in the brain is firing, every single axon is transmitting to produce this result. There aren't isolated parts that you can just take out and still have the same conversation with that person. Here was the original claim: quote:BUT: The way I understand it, the brilliance of the Turing test and why it is still relevant today is the fact that you can't fully imitate human intelligence without actually fully simulating human intelligence. There's so much baggage in that. What does "fully" mean? Is it just a synonym for "perfectly"? What's the difference between imitation and simulation, here? The statement sounds like a tautology: You can't perfectly simulate human intelligence without perfectly simulating human intelligence. Every single neuron in the brain is firing, but it's a pretty safe bet that not all those neurons have to do with *cognition*. There are neurons directing the lungs to exhale, to shape the vocal cords, to produce speech, there are neurons devoted to accessing and processing memories in order to produce language output, there are neurons that are just keeping the speaker standing upright and providing him with proprioception. 
Those are all part of this neural network with non-linear dynamics. Do you suggest that simulating all of that is necessary to imitate human intelligence? If you can't imitate human intelligence without fully simulating all that, then you're effectively arguing that there can be no imitation without full emulation of the underlying *meat*.

|

|

|

|

mng posted:Man, they had already established Logan as being a bit of a dick before they enter the park, but the way he kept wanting to rush William along was annoying. And yet as William pointed out, all Logan had done since they arrived was gently caress and drink and not wanting to explore. The drunk's treasure is probably real. Or as real as you can get in a place that's charging you 40k a day to stay there. Maybe you'll discover the real treasure is the memories you create. Or there's a chest full of skee-ball tickets that you can trade in at the WestWorld gift shop.

|

|

|

|

mng posted:

I was going to say that it's now my goal to create a game with a skippable tutorial that has some key to an ultimate weapon later in the game, but it almost definitely already exists. The intersection of Disney's Frontierland with modern video games is such an interesting premise to run with that every tiny detail makes you think about it. It helps that they got the right writer, but I have to reluctantly give credit to Michael Crichton for one hell of a high concept.

|

|

|

|

Bicyclops posted:I was going to say that it's now my goal to create a game with a skippable tutorial that has some key to an ultimate weapon later in the game, but it almost definitely already exists. While trying to come up with an example of this (of which I'm also sure it exists), I was instead reminded of the old Sierra adventure games - several of which had 'gotcha' moments that would make the game unwinnable if you didn't know what to do going in, but the actual point at which you would hit a brick wall was hours and hours later. I'm probably misremembering this, but one of the King's Quest games had a cat chase a mouse across the screen when you entered one particular room. This only happened once, the first time you entered, and you were supposed to throw a boot at the cat to distract it. If you don't know to do this the cat catches the mouse and whatever thing the mouse does for you 5 hours later never happens so you lose. If you're too slow the cat catches the mouse and you lose. If you didn't think to keep the boot you lose. If you used the boot to solve an earlier puzzle, guess what you lose. drat the old Sierra games were pretty lovely.

|

|

|

|

mng posted:Man, they had already established Logan as being a bit of a dick before they enter the park, but the way he kept wanting to rush William along was annoying. And yet as William pointed out, all Logan had done since they arrived was gently caress and drink and not wanting to explore. You literally have to lift up his patch and reach into his eyehole in order to get the key that unlocks the door at the center of the maze.

|

|

|

|

THA TITTY THRILLER posted:You literally have to lift up his patch and reach into his eyehole in order to get the key that unlocks the door at the center of the maze. That sounds too easy. The Man in Black would easily think to do that, but in 30 years, has probably decided never to do his quest.

|

|

|

|

Kazy posted:Probably due to the fact that their tech is top-secret so they heavily regulate outside communication to deter corporate espionage or something. Again, I think you guys are trying too hard on this one. There were 3+ open phone terminals when he made that comment, and we haven't heard a single Delos employee complain about being disconnected. I'm pretty certain that line was to point out that he ~really~ wants to avoid talking to his (ex?-) wife and thinking about his dead kid. By the way: "we've cured all disease," except for whatever was wrong with BabyBernard?

|

|

|

|

Phanatic posted:That's a statement of faith, not of evidence. It's a mathematical fact. Nonlinear dynamics can produce extremely complex and chaotic behaviour from very simple rules and such systems are very sensitive to the smallest perturbations. Take a look at the Wikipedia article, if you are interested: https://en.m.wikipedia.org/wiki/Nonlinear_system quote:There's so much baggage in that. What does "fully" mean? Is it just a synonym for "perfectly"? What's the difference between imitation and simulation, here? The statement sounds like a tautology: You can't perfectly simulate human intelligence without perfectly simulating human intelligence. Fully means perfectly, yes. Like, there is no significant variation in how humans and machines behave in all conversations. Imitation means that you produce the same output as a human being, simulation means that you replicate the process that led to this output. Imitation doesn't necessarily mean simulation. A trivial case for this would be to just prerecord human responses to different situations and then reproduce them on command, without any understanding of how to create novel responses. It's an imitation of intelligence, but not a simulation. quote:Every single neuron in the brain is firing, but it's a pretty safe bet that not all those neurons have to do with *cognition*. There are neurons directing the lungs to exhale, to shape the vocal cords, to produce speech, there are neurons devoted to accessing and processing memories in order to produce language output, there are neurons that are just keeping the speaker standing upright and providing him with proprioception. Those are all part of this neural network with non-linear dynamics. Do you suggest that simulating all of that is necessary to imitate human intelligence? If you can't imitate human intelligence without fully simulating all that, then you're effectively arguing that there can be no imitation without full emulation of the underlying *meat*. 
There is really no mind-body dichotomy, science has completely abandoned the whole idea a long time ago. You are meat, talking meat. Your breathing frequency regulates your heartbeat variance, your heartbeat variance regulates your consciousness (attention, memory, etc.), your consciousness regulates your breathing frequency. It's all interconnected, it's all part of your thought process and decision making. I'm not saying that you can't imitate human intelligence. I'm just saying that to do a perfect imitation you need to implement all the complicated nonsense that goes on in a human, because it's all important and influencing us all the time. And even a small influence can have dramatic effect over longer time periods, due to the dynamics of neural nets.

|

|

|

|

Raspberry Jam It In Me posted:Yeah, that's what I'm saying. Right but again, like he said, "equivalent intelligence" doesn't require modelling based on the human brain or the same size or complexity as the human brain. A more efficient design could very well exist that achieves the same end in less. Evolution is just a method of devising things based on mistakes, after all, as Ford keeps saying. Doesn't mean it's the ideal solution. Raspberry Jam It In Me posted:It's a mathematical fact. Nonlinear dynamics can produce extremely complex and chaotic behaviour from very simple rules and such systems are very sensitive to the smallest perturbations. Take a look at the Wikipedia article, if you are interested But they're not necessarily trying to make perfect robot clones of humans as they function in biology. The whole point is that as long as they're truly sentient and intelligent, that's enough. It doesn't have to be dendrites all the way down. The whole "smallest perturbations" thing doesn't apply to this situation at all. Unless you're trying to build a robot version of a living human and exactly model THEIR brain processes. Just creating intelligent beings doesn't have that requirement. Raspberry Jam It In Me posted:Imitation means that you produce the same output as a human being, simulation means that you replicate the process that led to this output. Imitation doesn't necessarily mean simulation. A trivial case for this would be to just prerecord human responses to different situations and then reproduce them on command, without any understanding of how to create novel responses. It's an imitation of intelligence, but not a simulation. Rather than simulate a human brain all you have to do is create something intelligent on its own. There's imitation of awareness and there's real awareness and then there's simulation of human awareness; those are 3 different things. You're forcing a false dichotomy here. 
Rather than simulate humans, another thing you can do is create a new lifeform. As long as it's intelligent that's enough. It doesn't have to be biologically identical to human brain function. The AI can look like humans without actually working exactly like humans do. Simulation is one way, but not the only way to AI. Zaphod42 fucked around with this message at 20:58 on Oct 19, 2016 |

|

|

|

I was thinking about Dolores' gun. I hadn't noticed she took it from Trevor in the barn. I was convinced that there was a subconscious string in Dolores' mind that was trying to get that gun to her in the barn so she could defend herself and break the loop. Day after day, it was willing her to clandestinely move it closer to the critical spot so she could live. But if she just took it from Trevor, I suppose it isn't the case. Though, if we assume her 'flashbacks' are previous scenarios playing back in her mind, like a super-deja-vu, then we assume that she has shot Trevor before. She had a flashback of her escaping the barn, and then getting shot by another bandit, and used that to avoid the same fate this time. I'm guessing the gun in the dirt and in her drawer were from a failed attempt that she somehow hid? I figure the park keeps inventory of that stuff, but maybe they could assume a guest used it and kept the gun(s) - and didn't worry because Dolores 'cannot' fire one. I dunno. And I'm at work, so I can't double-check - I could be missing obvious inconsistencies.

|

|

|

|

Raspberry Jam It In Me posted:It's a mathematical fact. Nonlinear dynamics can produce extremely complex and chaotic behaviour from very simple rules and such systems are very sensitive to the smallest perturbations. Take a look at the Wikipedia article, if you are interested Not all nonlinear systems are chaotic. There are all kinds of nonlinear systems which can be solved perfectly. Going from "The brain is a non-linear neural network" to what you arrived at isn't a mathematical fact, it's not even a consensus. https://en.m.wikipedia.org/wiki/Nonlinear_system I'm aware of what a nonlinear system is, thanks. quote:Fully means perfectly, yes. Like, there is no significant variation in how humans and machines behave in all conversations. I disagree that that would be imitation; I mean, if it's imitation it's a pretty crappy form of imitation that's utterly trivial to discern from actual cognition. But I think I see the distinction you're making. Are you a Searle fan, by the way? quote:There is really no mind-body dichotomy, science has completely abandoned the whole idea a long time ago. You are meat, talking meat. Of course I am. But the suggestion that the mind cannot be emulated without duplicating the underlying physical substrate is something that nobody but Penrose actually believes. quote:Your breathing frequency regulates your heartbeat variance, your heartbeat variance regulates your consciousness (attention, memory, etc.), your consciousness regulates your breathing frequency. It's all interconnected, it's all part of your thought process and decision making. So you *are* saying here that you'd need to build something that is a physical functional duplicate of the human brain in order to run a human-level intelligence? Phanatic fucked around with this message at 21:09 on Oct 19, 2016 |

|

|

|

VendaGoat posted:Clementine Is this her, or a random teenager at a mall

|

|

|

|

Jack Gladney posted:I would imagine that just like in actual meat machines like us consciousness is an emergent property of all of the parallel processes that allow the hosts to know and navigate the world. Certainly "consciousness" in any appreciably humanlike way. I suspect the way we think about advanced consciousness is like the way we approach habitable planets...we have a sample size of exactly 1 time either thing has happened, so it narrows our perspective and that's all we look for. Our version of consciousness is embodied subjectivity inside a meat-machine that contextualizes sensation through emotion and what-not. Maybe Google is "conscious," but it doesn't do any of those things so we might never notice.

|

|

|

|

King Vidiot posted:AKA Poor Man's Lady Gaga If she's the poor man's version I've never been happier to be destitute

|

|

|

|

Raspberry Jam It In Me posted:It's a mathematical fact. Nonlinear dynamics can produce extremely complex and chaotic behaviour from very simple rules and such systems are very sensitive to the smallest perturbations. Take a look at the Wikipedia article, if you are interested This sounds like the Chinese Room. quote:

You still seem to be arguing that to create the perfect human imitation, you need to re-create *Consciousness is also ill-defined. How do you know the person next to you is conscious without being able to read their minds? How do you know your dog is conscious?

|

|

|

|

I looked up the bicameral mind because I was curious, and the book that originated the idea was published in 1976; the original Westworld movie came out in 1973. Obviously, the TV show does a lot of things differently and draws on different sources for its inspiration (I think it's more than just a coincidence that the hosts seem to resemble NPCs in a video game RPG), but I find it fascinating that this relatively new philosophy motivates the retelling of an earlier work.

|

|

|

|

Skizzzer posted:*Consciousness is also ill-defined. How do you know the person next to you is conscious without being able to read their minds? How do you know your dog is conscious? This is the exact issue. "Consciousness" itself isn't really a testable or demonstrable phenomenon, just a concept we extrapolate from certain behaviors we observe in others. The whole simulation/imitation argument hits a wall at that point...we can conceive of a machine that appears conscious, but the lived experience of that machine is still totally inscrutable. It's more about philosophy than science, is the problem. There's no material of consciousness, no way to measure it or prove its existence within the framework of scientific method. It makes the idea of a scientist or engineer "programming" consciousness slightly absurd, because we can barely describe the phenomenon in ourselves.

|

|

|

|

It's important to remember that in Turing's time most people were questioning if machines could even think, which we now kinda take for a given, since they're so much more vastly complex than back then. They had a hard time with a machine testing inputs to find a matching set of variables, where today we have personal assistants that listen to us and process language and manage our lives. They're not AI yet obviously, but it's much more accepted that they will eventually be intelligent than it was back then. So he set a low bar just as a proof of concept, to explore the idea of a machine replicating the behavior of a human. 404notfound posted:I looked up the bicameral mind because I was curious, and the book that originated the idea was published in 1976; the original Westworld movie came out in 1973. Obviously, the TV show does a lot of things differently and draws on different sources for its inspiration (I think it's more than just a coincidence that the hosts seem to resemble NPCs in a video game RPG), but I find it fascinating that this relatively new philosophy motivates the retelling of an earlier work. Yeah it is a pretty new concept. Everybody was really in love with it for a while, I discovered it in college probably like a lot of goons in this thread and had a lot of fun considering the possibilities and the way of approaching intelligence it provided. It's been more or less debunked as how things actually happened, like Bernard rightly points out in the show, but it's still a very fun theory and concept. I said it before and I'll say it again; they've got an engineer or a psychologist on the writing team, or both. This show is really smart. Xealot posted:It's more about philosophy than science, is the problem. Bingo.

|

|

|

|

King Vidiot posted:AKA Poor Man's Lady Gaga I wouldn't say no to either.

|

|

|

|

The hosts are clearly self-aware. We're supposed to be sympathetic to the tragedy of their existence and consider the way the guests treat them as cruel and inhumane. Dolores is not becoming self-aware, she was that the whole time; she is rising above the arbitrary constraints that are enforced upon her, breaking her shackles if you will. I felt like the ethical/philosophical stance the show was taking on this was rather unambiguous. The greeter from the second episode even asks William McPoyle what's the difference between a host and a human if he can't tell the difference.

|

|

|

|

Xealot posted:This is the exact issue. "Consciousness" itself isn't really a testable or demonstrable phenomenon, just a concept we extrapolate from certain behaviors we observe in others. The whole simulation/imitation argument hits a wall at that point...we can conceive of a machine that appears conscious, but the lived experience of that machine is still totally inscrutable. Yes, absolutely. What I find interesting about consciousness is that it feels intuitively there. I believe that I'm conscious because I'm me and I'm privy to everything I feel; I attribute consciousness to other humans because they look like me, therefore, they're probably conscious too; and I attribute consciousness to my pets because I see how human-like they can be. I can't, however, prove any of this and so in a way arguing about consciousness feels like arguing about the existence of souls and in some ways, it's not just about philosophy - it's about religion.

|

|

|

|

Skizzzer posted:Yes, absolutely. What I find interesting about consciousness is that it feels intuitively there. I believe that I'm conscious because I'm me and I'm privy to everything I feel; I attribute consciousness to other humans because they look like me, therefore, they're probably conscious too; and I attribute consciousness to my pets because I see how human-like they can be. I can't, however, prove any of this and so in a way arguing about consciousness feels like arguing about the existence of souls and in some ways, it's not just about philosophy - it's about religion. Cogito ergo sum. But I want you to realize something. You could very well be the only real human being with consciousness and all of us, no matter how real we seem, could just be mannequins that are programmed to respond only to you. When you are not around, we could all shut off and not do anything. You could be the only real person on Earth.

|

|

|

|

|


|

Delores posted:

|

|

|