
To put the alleged TDP specs in perspective (per Wikipedia):
175W - 2060 Super, 2070
215W - 2070 Super, 2080
250W - 2080 Super, 2080 Ti
280-320W - Titan RTX


| # ? Jun 13, 2024 02:54 |


Oof. I'm afraid the 3080 might be out of my budget at this point. I want to replace my 1080, and I guess a 3070 would be more than enough for 1440p, but still... I'd have liked to get a 3080 anyway, just to slam everything on Ultra for the next few years without worrying.


I mean in practice, the cards often jump beyond their rated TDP so we might see the 3090 hit 400W at load


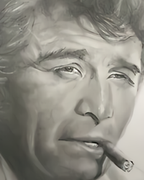

Rollie Fingers posted:Only 10GB of memory on the 3080 is incredibly cynical from Nvidia. Sigh. How do you think the CEO can afford so many stylish leather jackets?


The 3070 really seems like the dividing line between "this is reasonable" and LMAO WTF BBQ level stuff.
RTX 2080 Super: $699 MSRP, 250W TDP
RTX 3070: $599 MSRP*, 220W TDP** and speed between RTX 2080 Super and RTX 2080 Ti***
* Allegedly
** Also Allegedly
*** Also Also Allegedly


Rollie Fingers posted:Only 10GB of memory on the 3080 is incredibly cynical from Nvidia. Sigh.
shrike82 posted:And they've also confirmed the 20GB 3080.
i think you'll be ok unless you have an urgent need

sean10mm posted:The 3070 really seems like the dividing line between "this is reasonable" and LMAO WTF BBQ level stuff.
yeah, to me, based on what i've seen, this makes sense. the 3070 will most likely be performing towards the upper end of where turing did


Rollie Fingers posted:Only 10GB of memory on the 3080 is incredibly cynical from Nvidia. Sigh. if you really need to use a beefy card using pro software, buying a Quadro shouldn't be a problem lest something is very wrong with your business.


I got my 2080S for $625 7 months ago. Not that the 3070 isn't a better value but it'd be nicer if that was more like $500. Maybe the AIB boards will be a little more under $600. Nvidia can charge whatever they want, this isn't insulin, but it'd be nicer to see a bigger jump on new generations.


I mean value for gpus is usually a curve and generally the closer you want to be to the highest possible absolute performance the more each marginal performance increase is going to cost you. If you look at the good value cards this generation, it's the 1650 super, the 1660 super, and the 5700xt. If you're willing to not mindlessly crank every setting to max, you can get a very good 1080p60-1440p144 experience from these cards. People in the gpu thread are probably just a self selecting pool of folks willing to spend dumb amounts of money on a gpu. Edit: and if you care about value you also don't upgrade every generation.


Lockback posted:I got my 2080S for $625 7 months ago. Not that the 3070 isn't a better value but it'd be nicer if that was more like $500. Maybe the AIB boards will be a little more under $600.
I think compared to a 2080S for $625, a 3070 for any amount less represents a pretty good generational leap
3070 @ 220W would put it at theoretically less power consumption than a 2080 Super, for performance they're slotting in between a 2080S and 2080ti
E: now prorate that theoretical performance and what it would have cost last generation and im very ok with what i'm hearing
Worf fucked around with this message at 14:54 on Aug 28, 2020


sauer kraut posted:if you really need to use a beefy card using pro software, buying a Quadro shouldn't be a problem lest something is very wrong with your business.
My company's workstations do have Quadros but I've also been doing a bit of freelance work in my spare time. With the disruption to film production and VFX companies around the world having mass layoffs as a result, my freelance work could be my only work in a few months. The 11GB on my 1080Ti is already feeling inadequate given I'm now working with 4k textures and painting layers and layers of 8k floating point textures and procedural maps :/
I wanted to avoid the Quadros out of principle more than anything. The RTX 6000 is a 2080ti with 24GB of memory and it costs 300% more...

Statutory Ape posted:i think you'll be ok unless you have an urgent need
That would be good news. Hopefully it's closer in price to the standard 3080 than the 3090.
Rollie Fingers fucked around with this message at 15:01 on Aug 28, 2020


Rollie Fingers posted:Only 10GB of memory on the 3080 is incredibly cynical from Nvidia. Sigh. isn't the 3090 basically what you want? 24gb of vram at consumer-ish prices? it won't be cheap but it'll probably still be less than half the cost of a 24gb quadro lol


The 3090 has 20% more CUDA cores than the 3080. The 2080 Ti has 50% more cores than the 2080. Makes you wonder what they're doing to get a respectable performance delta with the 3090 and 3080.


shrike82 posted:Makes you wonder what they're doing to get a respectable performance delta with the 3090 and 3080. at least on the FE versions the 3090 might have higher sustained boost clocks due to the much beefier cooler


DrDork posted:Yeah, but now you're talking about a motherboard that's only half-way to ATX12VO as well as a PSU that's only half-way to ATX12VO, and would need additional plugs to carry 5v, so you can't just use the ATX12VO motherboard connector. I know, I was just going out on a tangent really. Although I'd be up for a beefy 5v standby rail to power all USB - it means you could charge phones without the board being powered up, for example. But that's just a side idea


repiv posted:isn't the 3090 basically what you want? 24gb of vram at consumer-ish prices? I could end up with that if the reviews are positive. The leaks over the last couple of weeks have just made me wary over its power consumption and heat output.


shrike82 posted:The 3090 has 20% more CUDA cores than the 3080. Well the 2080 Ti was only about 30% faster than the 2080 despite having 50% more cores. So they could have just made Ampere scale higher at extreme core counts better than Turing did.
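As a rough back-of-envelope check of the scaling argument above, the sketch below uses only the approximate figures quoted in these posts (50% more cores for about 30% more speed on Turing, 20% more cores on the 3090). It assumes, purely for illustration, that Ampere would scale no better than Turing did; the function name and the linear-scaling assumption are hypothetical, not anything Nvidia has published.

```python
def scaling_efficiency(core_gain: float, perf_gain: float) -> float:
    """Fraction of the extra cores that showed up as extra performance."""
    return perf_gain / core_gain


# Figures as quoted in the thread (approximate, not measured here).
turing_eff = scaling_efficiency(core_gain=0.50, perf_gain=0.30)

# Assumption: Ampere scales exactly like Turing (it may well do better).
naive_3090_uplift = 0.20 * turing_eff

print(f"Turing scaling efficiency: {turing_eff:.2f}")
print(f"Naive 3090 uplift over 3080: {naive_3090_uplift:.0%}")
# Turing scaling efficiency: 0.60
# Naive 3090 uplift over 3080: 12%
```

If the real gap turns out larger than this naive number, the difference would have to come from something other than core count, such as clocks or memory bandwidth.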


I don't know how rendering compute scales with GPU/VRAM but you might be better off getting 2x 3080 or even getting another 1080Ti to stack, versus a single card with more VRAM.


shrike82 posted:I don't know how rendering compute scales with GPU/VRAM but you might be better off getting 2x 3080 or even getting another 1080Ti to stack, versus a single card with more VRAM. Certain renderers like Arnold can memory pool, but unfortunately one software I use has no plans to introduce multi GPU support (even though it needs it more than any other) and the other one is buggy as poo poo with NVLink.


Someone said earlier in the thread that only Quadro cards have the right Nvlink support to memory pool.


shrike82 posted:I mean in practice, the cards often jump beyond their rated TDP so we might see the 3090 hit 400W at load This is impossible though, right? Since the PCI-E Slot + two 8 pin connectors can only push a maximum of 375W. (75+150+150)


AirRaid posted:This is impossible though, right? Since the PCI-E Slot + two 8 pin connectors can only push a maximum of 375W. (75+150+150) The FE cards don't use standard PCIe power connectors, and we've already seen one leaked AIB board with 3x 8pins (525W max)
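The connector arithmetic in the two posts above can be sketched in a few lines. The 75W slot and 150W per 8-pin figures are the ones quoted in the thread; the function name is made up for illustration, and the sketch only covers standard 8-pin plugs, not whatever connector the FE cards end up using.

```python
# In-spec power limits in watts, as quoted in the posts above.
SLOT_W = 75        # PCIe x16 slot
EIGHT_PIN_W = 150  # one standard 8-pin PCIe power connector


def board_power_limit(eight_pin_count: int) -> int:
    """Maximum in-spec board power for a card with this many 8-pin plugs."""
    return SLOT_W + eight_pin_count * EIGHT_PIN_W


for n in (2, 3):
    print(f"{n}x 8-pin: {board_power_limit(n)} W")
# 2x 8-pin: 375 W
# 3x 8-pin: 525 W
```

So a hypothetical 400W load would be out of spec on a 2x 8-pin board but comfortably inside the budget of a 3x 8-pin one.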


Shipon posted:curses that i bought a gsync monitor instead of a gsync compatible freesync monitor so i'm locked into nvidia at this point I have a freesync monitor. I was under the impression they weren't compatible with NVidia. Is that not the case?


Statutory Ape posted:I think the 970 is remembered fondly because despite the fact it was kind of hosed, it kept people running games at decent enough settings on their 1080p screens. A minor amount of games and settings would challenge the card's available VRAM, and the card outside of VRAM is competitive with midrange pascal and low end turing for performance.
How was the 970 hosed? I guess it doesn't matter now as I've been using the card for something like 4 years. It has worked very well up until quite recently. It seemed to me like it hit a pretty amazing price and performance. I can't recall the last time I've kept a video card as long as I have! Having said that, I'm quite excited to upgrade to a 3xxx.
Edit: I'm excited to finally play Metro and Control whenever these cards come out. I upgraded my PC in April and the video card is the final piece for a new system!
Vasler fucked around with this message at 15:49 on Aug 28, 2020


axeil posted:I have a freesync monitor. I was under the impression they weren't compatible with NVidia. Is that not the case? Depends. https://www.nvidia.com/en-us/geforce/products/g-sync-monitors/specs/


Vasler posted:How was the 970 hosed? I guess it doesn't matter now as I've been using the card for something like 4 years. It has worked very well up until quite recently. It seemed to me like it hit a pretty amazing price and performance. Misleading/false marketing regarding RAM, the last .5gb wasn't nearly as fast as the rest of the pool. It didn't really matter since you could 1080p on it just fine anyways, but everyone got like


Vasler posted:How was the 970 hosed? It effectively has 3.5GB memory instead of 4GB. Which hasn't been much trouble for me even at 1440p but I don't usually play terribly demanding games. I am really looking forward to hopefully upgrading to a 3060 or 3070 though!


axeil posted:I have a freesync monitor. I was under the impression they weren't compatible with NVidia. Is that not the case? You need a 10 series card or newer


Vasler posted:How was the 970 hosed? I guess it doesn't matter now as I've been using the card for something like 4 years. It has worked very well up until quite recently. It seemed to me like it hit a pretty amazing price and performance.
https://wccftech.com/nvidia-geforce...l%20at%203.5GB.
so it's kind of hosed but, regardless of that, it was/is still a good card
e; i never owned a 970 but i appreciate how performant and affordable it is, especially in the context of an industry with no real competition. grabbing a used 970 for easy enough money probably powered a lot of people into a lot of games for cheap. especially during the dark mining times
Worf fucked around with this message at 15:57 on Aug 28, 2020


shrike82 posted:The 3090 has 20% more CUDA cores than the 3080. Going by those leaked specs earlier the 3090 also has a gigantic memory bandwidth advantage. It has relatively a bit more bandwidth per core than the 3080 does, so that probably helps.


Gwaihir posted:Going by those leaked specs earlier the 3090 also has a gigantic memory bandwidth advantage. It has relatively a bit more bandwidth per core than the 3080 does, so that probably helps. 20% between the 3090 and 3080, 40% between the 2080 Ti and 2080. The benchmarks are going to be interesting.


Yeah, absolutely. There's so much weird and different stuff going on right now with this generation that I'm sure the tech sites are having a field day.


Statutory Ape posted:https://wccftech.com/nvidia-geforce...l%20at%203.5GB. Yup, the 970 might have had a memory issue, but it doesn't take away from the fact that it was a great deal, and we recommended an assload of them on these fine forums. We should be so lucky to see the 3070 at $329. Hah!


So is that 20% performance difference between 3080 and 3090 big enough to justify getting one? My goal is to run current and future games at 1440p with everything set to ultra. If the difference between 3080 and 3090 is simply 130 FPS vs 144 then I don't need the 3090.


So how many more weeks or months will AMD be stalling leaks and news? Will they openly allow losing the 3070 and 3080 target group? Because they will, if the rumors are true that they won't launch new AMD GPUs before November. That's like 2 months of Nvidia selling next gen GPUs without any competition.
Mr.PayDay fucked around with this message at 16:38 on Aug 28, 2020


Kraftwerk posted:So is that 20% performance difference between 3080 and 3090 big enough to justify getting one?
I claim the 3090 will be at least 30% faster with RTX off and 50% faster with RTX on than the 3080. Otherwise it might be DOA for 600 Euro/Dollar "fee" for just more VRAM compared to the 3080.


Kraftwerk posted:So is that 20% performance difference between 3080 and 3090 big enough to justify getting one? No one knows and the 20% is based purely off of CUDA core counts, we don't know clock rates, RT core counts/speeds, tensor core counts/speeds, or how all of that interplays with memory bandwidth on a new architecture and process. We'll know on Tuesday


Mr.PayDay posted:I claim the 3090 will be at least 30 % faster with RTX off and 50% faster with RTX on than the 3080. It won't be DOA. As long as it's The Best (tm), the people who would even consider buying it in the first place will buy it. Price/performance doesn't mean poo poo if you have 1400 bucks lying around for a GPU.


Rollie Fingers posted:Only 10GB of memory on the 3080 is incredibly cynical from Nvidia. Sigh.
Cynical? Eh. I'm a little surprised they didn't at least stick with 11GB to match the 2080Ti, but there was never a reasonable expectation that they were going to slap 14 or 16GB on there as a baseline--the VRAM is very expensive, and that much just isn't needed 95% of the time. It sucks for people who wanted to do Quadro-style work without paying Quadro-style prices, but it would suck more to saddle everyone else with an even more expensive card.

Beautiful Ninja posted:It won't be DOA. As long as it's The Best (tm), the people who would even consider buying it in the first place will buy it. Price/performance doesn't mean poo poo if you have 1400 bucks lying around for a GPU.
Yeah, people still bought RTX Titans for $2500 despite not being convincingly faster than a 2080Ti at half the price. Some people will buy it just for the extra VRAM, and others will buy it because they have $$$ to burn and want that extra performance. NVidia's halo products have never been a good price:performance ratio, that's why they're halo products.




sean10mm posted:To put the alleged TDP specs in perspective (per Wikipedia): I have a 750 Watt Power supply with a Ryzen 3900X. I'm guessing I'm still good for grabbing any of the cards being announced?
