|

they are clearly trying to drive the cost down by reusing training material across model versions though i wonder how often it gets updated actually, if at all? does old stuff get pulled, or does new stuff just get added?

|

|

|

|

|

| # ? Apr 27, 2024 17:04 |

|

we're not talkin data collection, we are literally talking millions in compute from running gpu. both training and vicious inference costs

they scaled to 32k tokens by jacking up the O(n^2) token attention thing without algorithmic improvements, for example. you can tell this cuz they jacked up the price by O(n^2) lol
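a rough sketch of the quadratic scaling mentioned above, assuming plain dense self-attention where every token attends to every other token. the 8k baseline and the function names are made up for illustration, they're not from any vendor's numbers:

```python
def attention_score_entries(context_len: int) -> int:
    """Entries in the n x n attention score matrix (one head, one layer)."""
    return context_len * context_len


def relative_attention_cost(new_len: int, base_len: int) -> float:
    """How much more score-matrix work new_len needs compared to base_len."""
    return attention_score_entries(new_len) / attention_score_entries(base_len)


# Hypothetical comparison: going from an assumed 8k context to 32k.
# 4x the tokens means 16x the attention score entries.
print(relative_attention_cost(32_768, 8_192))  # 16.0
```

this only counts the attention score matrix. the rest of the network scales roughly linearly with token count, so the real bill sits somewhere between linear and quadratic.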

|

|

|

pseudorandom name posted:they don't care, they're venture capitalist scum and they're gambling that it'll work and they'll get away with it and the profits will be astronomical

The only profitable use case for this tech is separating vcs from their money

|

|

|

|

i highly doubt any of the current models pay for their inference (use) cost, much less any other aspect of their development. but then the current applications are dumb tech demos.

|

|

|

|

i would absolutely believe that openais compute bill is above a billion. maybe above a billion per quarter. so costs gotta be defrayed still.

amazon is brutally incompetent at this and theyre still prolly the ones who are gonna make all the money. and azure too ofc

|

|

|

isn't it all custom chips now

|

|

|

|

yeah, rented asics but they still fuckin cost

|

|

|

|

kid: mom i want some adidas
mom: we got adidas at home
adidas at home:

bob dobbs is dead posted:yeah, rented asics

|

|

|

|

can you run LLMs on the "ML accelerator" that increasing numbers of cpus and socs come with these days

|

|

|

|

it is always straightforward to compress nets by 5 to 8 orders of magnitude as postprocessing step. it wrecks a lotta good stuff for the generative crap tho

same w attention crap. i know of like 4 O(n log n) scaling sparse attention dealios, they just dont use em cuz theyre all a bit crap

|

|

|

i don't think asics and details of the raw compute matters that much for inference, in that i'd be kind of surprised if e.g. bing chat didn't do upwards of 1 terabyte worth of touches in very expensive memory per word of output.
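a back-of-envelope sketch of why memory traffic per generated token gets so large, assuming a dense decoder that reads every weight once per token with no batching. the 175 billion parameter count and 2 bytes per weight are hypothetical inputs picked for illustration, not known figures for bing chat:

```python
def weight_bytes_read_per_token(n_params: int, bytes_per_param: int = 2) -> int:
    """Bytes of weights streamed from memory to generate one token.

    Assumes a dense model where every parameter is read once per token.
    """
    return n_params * bytes_per_param


# Hypothetical model: 175 billion parameters stored as 16-bit values.
bytes_per_token = weight_bytes_read_per_token(175_000_000_000)
print(bytes_per_token / 1e9, "GB per token")  # 350.0 GB per token
```

under these assumptions a single token already touches hundreds of gigabytes. batching many requests together spreads the same weight reads across users, which is one reason the per-word figure for a real service is hard to guess from outside.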

|

|

|

|

haveblue posted:can you run LLMs on the "ML accelerator" that increasing numbers of cpus and socs come with these days

when i first got my new laptop that had one of those i was like "oh can i run dall-e on that, it'd be fun to run the bigger/better model locally just to play around with" and the answer at the time was "lol no"

i think they were working on it or something but idk i stopped caring around that point

|

|

|

|

its yak shaving, not fundamental problems

|

|

|

|

Cybernetic Vermin posted:i don't think asics and details of the raw compute matters that much for inference, in that i'd be kind of surprised if e.g. bing chat didn't do upwards of 1 terabyte worth of touches in very expensive memory per word of output.

that's what i was thinking too but i don't know enough about how these work to say for sure. if it's essentially a fancy lookup routine it probably uses a ton of memory but i don't see why it would necessitate a lot of gpu compute, especially if we're talking text

|

|

|

|

its a buncha matrix multiplies that dominate
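a small sketch of why the matrix multiplies dominate, using the standard count of 2*m*k*n floating point operations for an (m x k) by (k x n) product. the hidden size of 4096 and the 4x wide projection are hypothetical layer dimensions picked for illustration:

```python
def matmul_flops(m: int, k: int, n: int) -> int:
    """Multiply-adds for an (m x k) @ (k x n) product, counted as 2 FLOPs each."""
    return 2 * m * k * n


def elementwise_flops(m: int, n: int) -> int:
    """One operation per element, e.g. applying an activation function."""
    return m * n


# Hypothetical layer: one token (m=1) projected from 4096 to 16384 features.
hidden, wide = 4096, 4 * 4096
projection = matmul_flops(1, hidden, wide)
activation = elementwise_flops(1, wide)
print(projection // activation)  # 8192
```

so for this made-up layer the projection costs thousands of times more arithmetic than the activation that follows it, and a model stacks many such projections per token.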

|

|

|

|

having been in professional services for the past decade+ it was probably once a year where i'd be working with a client who was trying to build some ai bullshit. every single one of them was a colossal waste of time and money that would have been saved if the brain genius behind it had just done some basic research on what the state of the art was actually capable of at the time

that being said we've been internally testing a chat bot running gpt-4 that's just built to take customer questions about our stuff and give answers plus citations and i gotta say, it's pretty drat good. it's gotten a few wobbly responses but when it did it would then call out that our documentation was 'ambiguous' in the next sentence and in every case so far it's been because there was a gap in our documentation.

as someone said earlier itt this is the area i could see this thing being really useful. it's not going to save us any money because we're a small company and can't afford to have someone sitting around answering these small questions however it WILL make the customer experience way better in both them being able to find answers more quickly and also helping us find gaps in our documentation

big companies that employ an army of tier 1 support are absolutely scheduling an appointment with their local slaughterhouse though

|

|

|

|

i cannot wait for these idiot things to be the only method of interaction with companies that refuse to staff a support line or email, and simply refer you to their absolutely useless faq for support

|

|

|

|

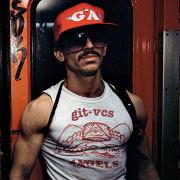

PIZZA.BAT posted:having been in professional services for the past decade+ it was probably once a year where i'd be working with a client who was trying to build some ai bullshit. every single one of them was a colossal waste of time and money that would have been saved if the brain genius behind it had just done some basic research on what the state of the art was actually capable of at the time

someone is going to do this poo poo to your support AI and then follow that up by having sex with it:

|

|

|

|

the other real useful case is having something that can spit out a bunch of almost working code from a prompt. a friend and i were playing around with it a month ago and we had it write us a discord bot that could take requests for music to build a playlist and stream it to us from youtube. all we had to do was register and paste in some api keys and it worked fine

was it buggy? absolutely

will idiots use this and get themselves into tons of trouble because the ai makes really dumb mistakes? absolutely

however having something that can get you past all the dumb scaffolding bullshit and straight to the meat of what you want to build is really nice. i'll probably be using it any time i'm starting something from scratch

|

|

|

|

Shame Boy posted:someone is going to do this poo poo to your support AI and then follow that up by having sex with it:

idgaf as long as they renew their contract next quarter

|

|

|

|

hell, i feel like any time i encounter a GPT-driven chat bot a company has put in between me and an actual person i will immediately try to have sex with it, just on principle

|

|

|

|

ok but have you gotten nude tayne from it yet?

|

|

|

|

PIZZA.BAT posted:the other real useful case is having something that can spit out a bunch of almost working code from a prompt. a friend and i were playing around with it a month ago and we had it write us a discord bot that could take requests for music to build a playlist and stream it to us from youtube. all we had to do was register and paste in some api keys and it worked fine

this is an amazing way to build trivially exploitable systems with slightly less effort than just bodging the top five results from stack overflow into your IDE and hitting compile

|

|

|

|

i dont understand the benefit of using chatgpt to write scaffolding over just forking the trivial example project or using existing generators, or copy-paste from the getting started docs, or whatever. there's always something that basically does what you want that doesn't involve playing mother-may-i with knockoff eliza

|

|

|

|

Shame Boy posted:hell, i feel like any time i encounter a GPT-driven chat bot a company has put in between me and an actual person i will immediately try to have sex with it, just on principle

|

|

|

|

originally had  there but that implication is even worse

|

|

|

|

furries trying to gently caress tony the tiger got kellogg's to seriously re-think their social media presence, i'm just applying that logic to everything

|

|

|

|

Shame Boy posted:furries trying to gently caress tony the tiger got kelogg to seriously re-think their social media presence, i'm just applying that logic to everything

i mean, not sure "i'm gonna rape this thing to assert my dominance" is something you wanna be applying universally

|

|

|

|

Beeftweeter posted:i mean, not sure "i'm gonna rape this thing to assert my dominance" is something you wanna be applying universally

whoa whoa whoa buddy, shame boy was talkin about _consensually_ fuckin' the cereal mascot and/or probability matrix

i hope

|

|

|

|

outright explicit sex is like the one thing Brands are still afraid of being associated with and you will not take this away from me dammit

|

|

|

|

i wonder what's going to happen to the lag time between "this cutting-edge llm runs on insanely expensive servers and must take place offsite" and "this llm has been crunched down to run on a current-gen apple silicon mac" over the next few years

|

|

|

|

Achmed Jones posted:whoa whoa whoa buddy, shame boy was talkin about _consensually_ fuckin' the cereal mascot and/or probability matrix

i personally would not gently caress the tony the tiger twitter account and you can quote me on that

the math robot, on the other hand,

|

|

|

|

Internet Janitor posted:i wonder what's going to happen to the lag time between "this cutting-edge llm runs on insanely expensive servers and must take place offsite" and "this llm has been crunched down to run on a current-gen apple silicon mac" over the next few years

these things get a ton of press (whether its good or bad doesn't really matter), so i wouldn't be surprised if a lot of companies drop everything to start working on LLMs, apple included. meta and google already announced a bunch of poo poo of course but they're probably pouring resources into them now

|

|

|

|

Beeftweeter posted:these things get a ton of press (whether its good or bad doesn't really matter), so i wouldn't be surprised if a lot of companies drop everything to start working on LLMs, apple included. meta and google already announced a bunch of poo poo of course but they're probably pouring resources into them now

|

|

|

|

Jonny 290 posted:kid: mom i want some adidas

|

|

|

|

pseudorandom name posted:massive intellectual property theft on an unprecedented scale

it really is balls that we live in a world where the courts determined that <10s samples were not fair use, kneecapping creativity in hip hop (unless you could afford an army of people just to determine rightsholders before you even drew up the contracts to pay them) but the AI mystery meat variety is okay because it'll be a good few decades before lawyers can even understand it, and even then it'll be nigh-impossible to prove infringement anyway

on the other hand, maybe at least it's a small "gently caress you" to the relentless extension of copyright by the american entertainment industry

|

|

|

|

the lawsuits are literally in court right now

who knows if they will hand down something not foolish but you cant pretend theyre not in court right now

|

|

|

|

don't tell me what i can and can't pretend bob

|

|

|

|

bob dobbs is dead posted:the lawsuits are literally in court right now

It'll be interesting to see what the outcome is but I wouldn't be surprised if a set of neural network weights qualifies as fair use, given that Google image search was fair use when its product actually contains the images in question.

|

|

|

|

|


|

4lokos basilisk posted:wait its possible to have a day job thats optimizing and getting rid of unnecessary cloud crap **and** it has management buy in?

i work at aws and even we advocate people do this

|

|

|